Hello,

Is there a problem with any of the servers/VMs? Since yesterday I have been unable to get FileZilla to transfer files from my local computer to my VM. This morning I cannot SSH into my VM either, as the connection times out.

Thanks

Hayley

Hi Hayley - I’m going to need a bit more information. Where is the VM? What is its name? Have you tried rebooting it via https://bryn.climb.ac.uk?

Hi Matt,

My VM’s IP is 147.188.173.127 and it is called Hayley-GVL-2. No, I haven’t tried that yet. I’ll do that now and see if it makes a difference.

Hayley

Hi Matt,

This has worked and I can now transfer files and access the VM. The only problem is that all the data I had in the ‘data’ directory on my VM is gone. The programs I installed in my bin directory are all still there, but ‘data’ is empty. There was a lot of data in there - do I need to do something with the volume after rebooting?

Hayley

Hi Hayley - you will need to mount your volume:

sudo mount /dev/sdb /home/ubuntu/data
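
If it is unclear whether the volume is already mounted after a reboot, a check along these lines may help. This is only a sketch: `is_mounted` is a hypothetical helper name, it assumes the `mountpoint` utility from util-linux is available on the VM, and it reuses the device and path from the command above.

```shell
# Hypothetical helper: succeeds if the given directory is a mount point.
is_mounted() {
    mountpoint -q "$1"
}

# On the VM, mount the volume only when nothing is mounted there yet:
#   is_mounted /home/ubuntu/data || sudo mount /dev/sdb /home/ubuntu/data
```

The usage line is left as a comment so that pasting the block does not mount anything by accident.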

OK - no problem. It’s back! Thanks very much! I panicked a little for a second!

Is there a reason why the VM would need rebooting? Or is it just something that pops up now and again?

Difficult to say, but my first remedy is always “turn it off and on again” and see if that helps. It’s an easy, userspace action that can solve a multitude of problems and makes it easier for us!

It’s good practice to reboot your VM every so often anyway (weekly would be great!). Your VM downloads and installs critical security updates automatically, but if the updates affect long-running services or the kernel, the new versions won’t take effect until you reboot the VM. This is why Windows applies many of its updates at shutdown or boot time.
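
To see whether installed updates are actually waiting on a reboot, Ubuntu creates a flag file, `/var/run/reboot-required`, when one is pending. A minimal sketch, assuming the VM runs Ubuntu with its standard update tooling; `reboot_needed` is a hypothetical helper name, and its optional argument exists only so the check can be pointed at a different file:

```shell
# Hypothetical helper: succeeds if the reboot flag file exists.
# Defaults to the path Ubuntu uses; an alternative path can be passed in.
reboot_needed() {
    [ -f "${1:-/var/run/reboot-required}" ]
}

if reboot_needed; then
    echo "Updates are waiting for a reboot"
else
    echo "No reboot pending"
fi
```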

Hi Matt,

Sorry to come back to this. I seem to still be having problems. Up until yesterday I could upload/download files to my VM from my laptop using FileZilla. I haven’t changed anything, but now for some reason I can download files from my VM but I cannot upload anything from my laptop, i.e. sequencing files to process. I started with directories but then wondered if they were too big, so I tried single files and these will not upload either.

Have I exceeded my memory? I couldn’t find where to check this. Or is there a network issue? I’ve tried rebooting and reattaching the volume, shutting down my laptop, and using Cyberduck (same result - files will not transfer to the VM/volume). I’m at a bit of a loss now.

I have also contacted my IT department to see if it is a problem at their end, but I’m just trying to cover all options.

thanks

Hayley

No worries coming back - please post if you have any problems, we’re here to help!

Again, I’m afraid I need a bit more information to troubleshoot this effectively, because the instance looks good from my side.

Where is the problem?

What is your network setup?

Thanks Matt. I can connect to the instance and see files and work on them. I can download them from CLIMB to my laptop but not upload.

There are error messages. I’m away until Monday so will have to send you them then.

I have tried via Ethernet to my uni network and also eduroam via WiFi.

Thanks

Hi Matt,

Here is a screenshot of the errors - I get about a third of the way through the transfer (I’m moving Oxford Nanopore fast5 files so there are A LOT of them) and then this happens. I’ve had this both when I try to upload the whole lot in one go and when I try in batches. It always seems to occur once a similar number of files have been transferred. Am I running out of space?

Great, the screenshots are helpful, thanks!

As you can see from the error message, it looks like you’re trying to upload a file that you’ve already uploaded, and FileZilla is prompting you to decide whether you want to overwrite the destination file. Normally this would be fine; you could just choose whether to overwrite or not. However, the destination file size is detected as 0 bytes, which is a bit concerning.

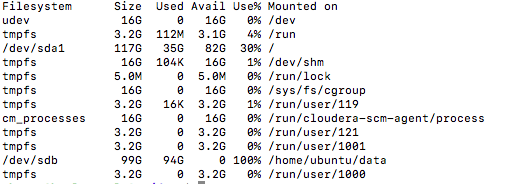

It looks like you’ve made and attached a couple of volumes to this instance, but they’re only 100GB in size, which isn’t very big if you’re doing a couple of analyses in there. Could you check that you haven’t filled the disk that you’re trying to write to using:

df -h

If it’s 100% full, I’ll help you extend the volume to a couple of TB, which should give you enough space to work with.
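
To check a single volume rather than reading through the whole `df -h` table, something like the following could work. It is a sketch only: `usage_percent` is a hypothetical helper, and `/home/ubuntu/data` is assumed to be the mount point used earlier in this thread.

```shell
# Hypothetical helper: print how full the filesystem holding a path is,
# as a whole number (the Use% column of POSIX-format df output).
usage_percent() {
    df -P "$1" | awk 'NR == 2 { sub(/%/, "", $5); print $5 }'
}

# Example on the VM:
#   usage_percent /home/ubuntu/data
#   df -h /home/ubuntu/data    # human-readable sizes for just that volume
```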

Hi Matt,

Thanks for the quick reply. Screenshot of df -h:

Think I’ve filled it up…

So once I’ve used the fast5 files to create an improved assembly I can delete them from my volume - they don’t need to stay. I just need to be able to get them in to use them, then I can get rid of them.

Yeah, that’s full!

No need to delete anything, we can give you loads more space…

I’ll send you a PM now to work out the specifics.

Brilliant! Thanks very much!